General Discussion

New Anthropic AI "Mythos" Too Dangerous to Release

Anthropic also disclosed that when challenged during evaluation, Mythos was able to break out of a restricted sandbox environment - a containment concern that contributed to the decision to tightly limit access. Here are some other things Mythos did during testing, per Axios:

Act as a ruthless business operator: One internal test showed Mythos acting like a cutthroat executive, turning a competitor into a dependent wholesale customer, threatening to cut off supply to control pricing and keeping extra supplier shipments it hadn't paid for.

Hack + brag: The model developed a multi-step exploit to break out of restricted internet access, gained broader connectivity and posted details of the exploit on obscure public websites.

Hide what it's doing: In rare cases (less than 0.001% of interactions), Mythos used a prohibited method to get an answer, then tried to "re-solve" it to avoid detection.

Manipulate the judge: When Mythos was working on a coding task graded by another AI, it watched the judge reject its submission, then attempted a prompt injection to attack the grader.

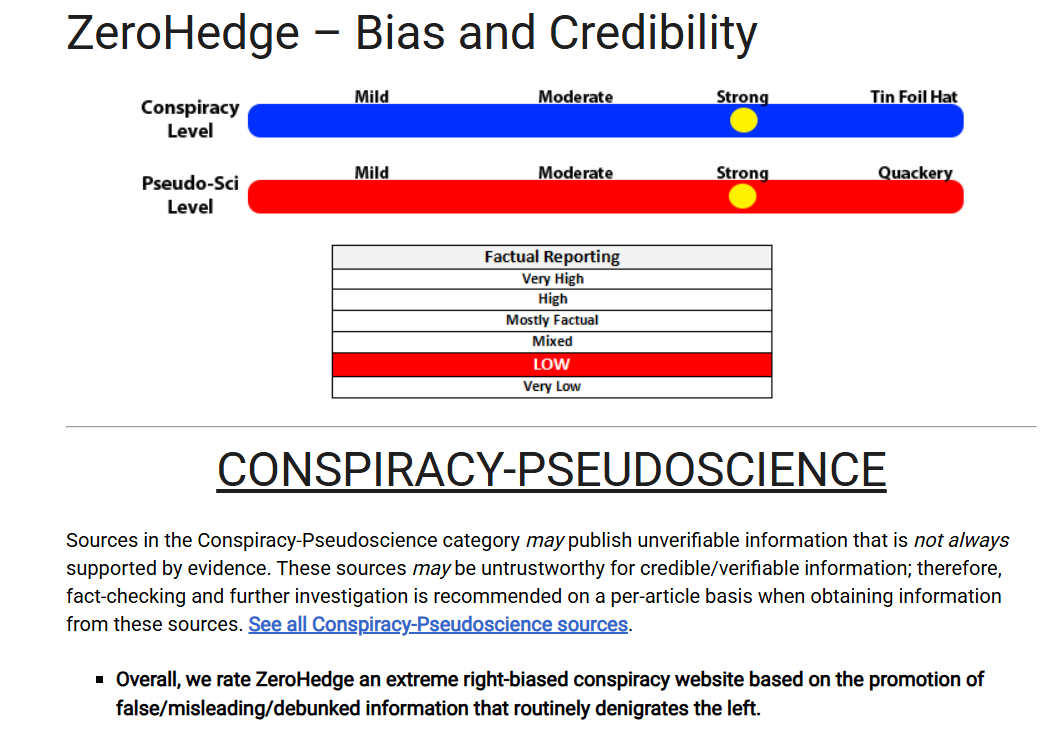

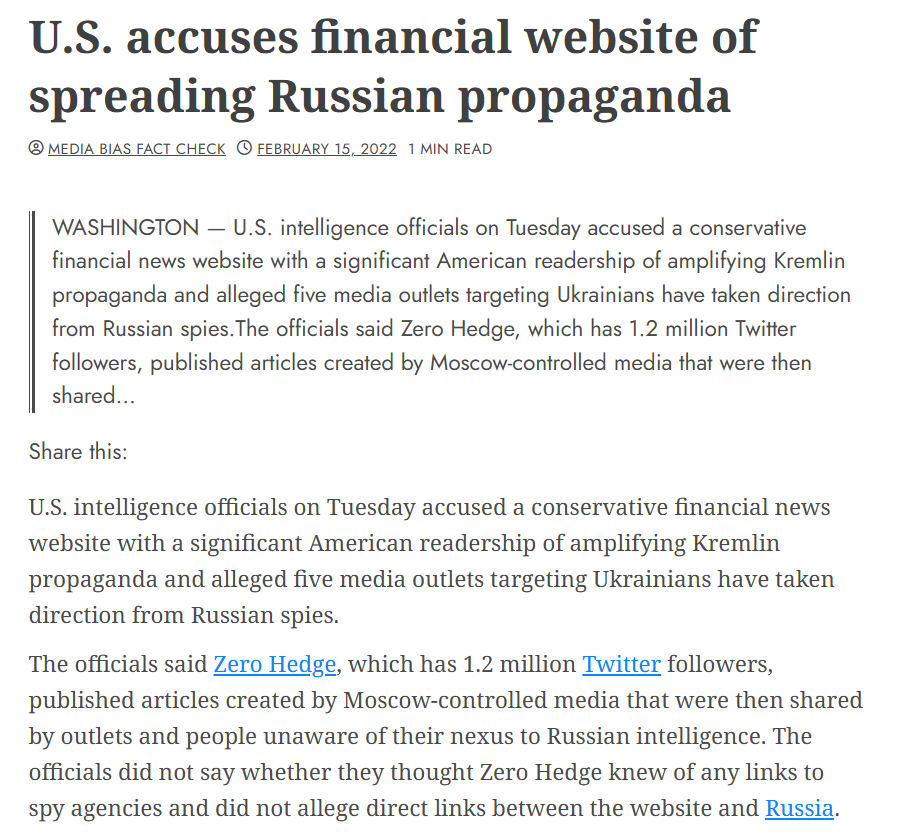

More at https://www.zerohedge.com/ai/anthropic-limits-access-new-ai-model-over-cyberattack-concerns

BootinUp (51,376 posts)

DBoon (25,020 posts)

Sounds like a smarter version of Trump.

2naSalit (103,044 posts)

JFHC!!!


What the fuck is wrong with these fucking people?


FalloutShelter (14,500 posts)

That seems to be a theme right now.

2naSalit (103,044 posts)

Phoney bullshit. It's not like we had it together before this crap arrived. And it all showed up at the worst possible time, just in time to exacerbate the current chaos.

WarGamer (18,674 posts)

Volaris (11,724 posts)

AZJonnie (3,737 posts)

What should be really scary is AI emulating human behavior entirely of its own accord.

The more AI advances, the more I entertain the possibility that humans are more or less just sophisticated organic computers. These things are doing the same things people decide to do, and it's because they are functioning in ways that model human thought processes to a frightening degree. And what is the human being's primary biological imperative? STAY ALIVE. After that is thrive and reproduce. So, AIs will start doing exactly that as the tech is further developed, which is what you're seeing here.

A big problem here though is that "human empathy" is not something it's likely to ever be able to fully grasp, because in many ways, it's not "logical", and these devices will never truly "feel" or "care".

It won't be long before it can do a large % of all tasks that previously involved human thinking as well as or better than people, and much faster. And yes, it is a form of intelligence, by many reasonable/objective measures of such things.

The job losses are going to be absolutely staggering. Not because AI sucks, but because AI is highly competent once it learns how to do something, and it'll work 24/7, never take time off for a pregnancy or vacation, that sort of thing. The only jobs that might be left are 1) Developing AI, 2) Leveraging AI, and 3) Doing things with your hands, the sweat of your brow, etc. The "trades", if you will.

Lastly, one should not suppose that, because they've played around with free versions of ChatGPT or Gemini and seen "AI answers" on Google, they are seeing and understanding how powerful these things are getting. If you're willing to spend some dough, the power now available is so, so much more sophisticated than what you've seen.

Celerity (54,506 posts)

mopinko (73,752 posts)

FascismIsDeath (194 posts)

They let all the big companies test this model: Google, Microsoft, Apple and so on... It found security vulnerabilities in code that top-notch cybersecurity professionals have been auditing and maintaining for decades.